An interaction-profile abstraction that treats robot behavior as reusable software rather than hidden deployment tuning.

Research / local preprint / May 2026

BEHAVIOR LAYER PREPRINT

Toward a Behavior Layer for Robots: Reusable Interaction Profiles, Human Service References, and Cross-Task Proof on a Humanoid Platform

Abstract

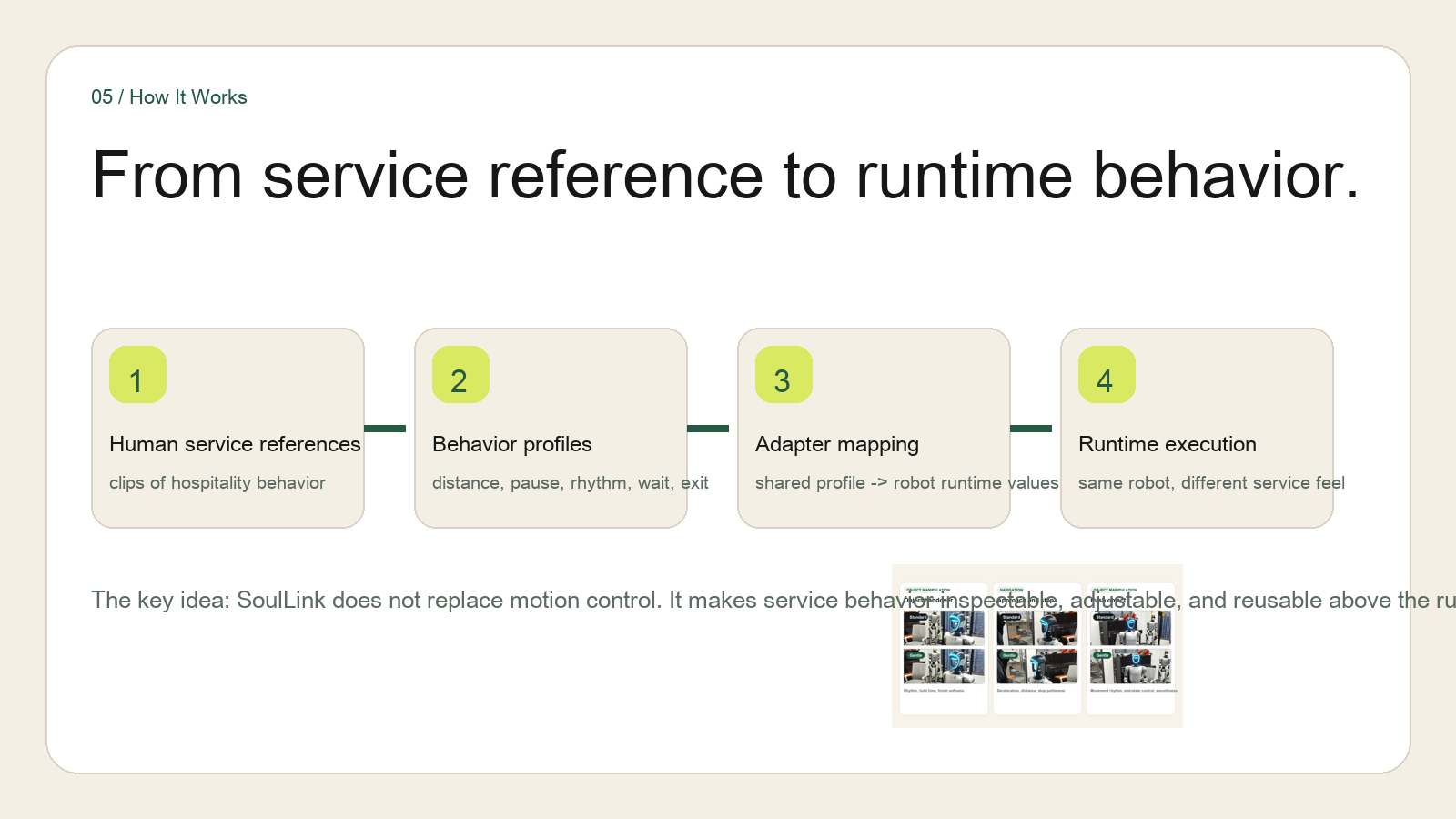

A narrow but explicit systems claim.

Robots are improving quickly at navigation, manipulation, and autonomy, but human-facing behavior is still rarely packaged as reusable software. This preprint proposes a behavior-layer formulation in which interaction style is represented explicitly through reusable profiles that resolve into execution fields such as speed scaling, pause timing, stopping distance, handover cadence, and end-effector smoothing. The current prototype study holds the humanoid robot, filming environment, and task framing fixed and varies only the active profile across three tasks: object handover, approach-and-stop, and push object.
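As a minimal sketch of what a reusable profile could look like in code, the example below models a profile as an immutable record and resolves it into per-task execution fields. The field names follow the abstract, but the class name `InteractionProfile`, the `resolve` helper, the units, and every numeric value are assumptions made for illustration, not the paper's implementation or data.

```python
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class InteractionProfile:
    """Hypothetical profile record; field names follow the abstract, units are assumed."""
    name: str
    speed_scale: float          # unitless multiplier on nominal speed
    pause_s: float              # pause between interaction steps, seconds
    stopping_distance_m: float  # distance kept from a person when stopping, meters
    handover_cadence_s: float   # time between handover phases, seconds
    ee_smoothing: float         # end-effector smoothing factor in [0, 1]


# Placeholder values for illustration only; they are not taken from the paper.
STANDARD = InteractionProfile("standard", 1.0, 0.5, 0.6, 1.0, 0.5)
GENTLE = replace(
    STANDARD,
    name="gentle",
    speed_scale=0.8,
    pause_s=1.0,
    stopping_distance_m=0.7,
    handover_cadence_s=1.5,
    ee_smoothing=0.6,
)

TASKS = ("object_handover", "approach_and_stop", "push_object")


def resolve(profile: InteractionProfile, task: str) -> dict:
    """Resolve a profile into the execution fields applied to one task."""
    if task not in TASKS:
        raise ValueError(f"unknown task: {task}")
    fields = asdict(profile)
    fields["profile"] = fields.pop("name")
    fields["task"] = task
    return fields


# Only the active profile changes; the task list stays the same.
for task in TASKS:
    print(resolve(GENTLE, task))
```

Keeping the profile immutable and deriving Gentle from Standard with `replace` makes the difference between two profiles an explicit, inspectable set of field changes.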

Why this page exists

We are showing the preprint locally before the public paper link is finalized.

This page presents the title, abstract, core claims, and source files without depending on an external preprint host that is not yet live.

Core contributions

What the paper actually claims.

The current draft does not make a general robot-intelligence claim. It is a systems paper about making behavior explicit, reusable, and inspectable above the runtime.

An adapter-based runtime boundary that maps behavior fields into robot-specific execution values without replacing low-level control or safety.

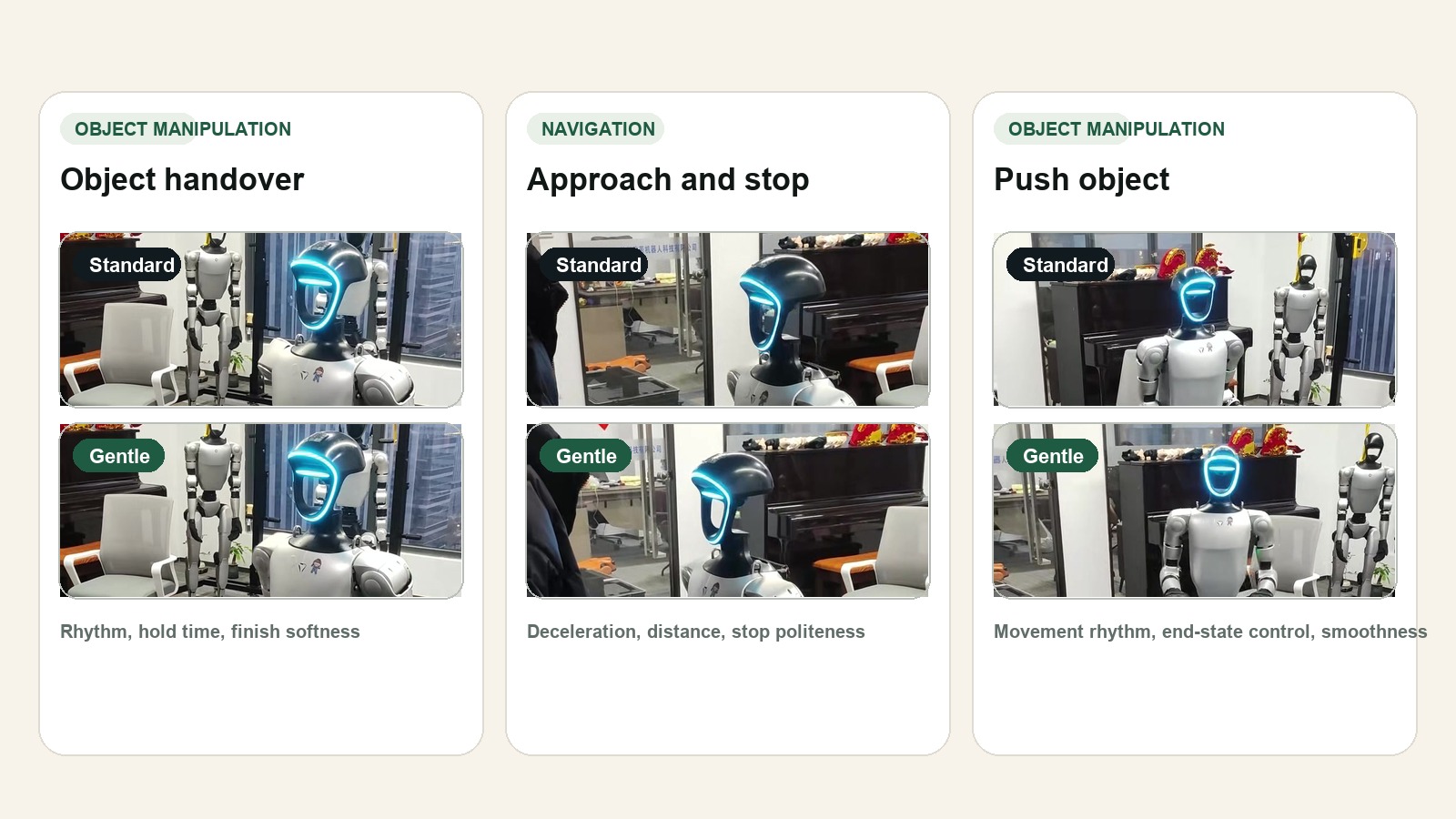

A controlled proof setup showing that a single profile change remains visible across object handover, approach-and-stop, and push object on the same humanoid platform.

A reference-and-validation loop that connects human behavior references, structured labels, and robot proof clips as a path toward future preference-aware evaluation.
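The adapter-based runtime boundary listed among the contributions above can be sketched as follows. This is a hedged illustration, not the paper's adapter: `PlatformLimits`, `HumanoidAdapter`, the output keys, and the clamping policy are all hypothetical. The point it shows is that the behavior layer only requests values, while limits owned by the robot's own stack always win.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformLimits:
    """Hypothetical hard limits owned by the robot stack, not by the behavior layer."""
    max_speed_mps: float
    min_stopping_distance_m: float


class HumanoidAdapter:
    """Hypothetical adapter mapping behavior fields to robot-specific values."""

    def __init__(self, nominal_speed_mps: float, limits: PlatformLimits) -> None:
        self.nominal_speed_mps = nominal_speed_mps
        self.limits = limits

    def to_execution_values(self, fields: dict) -> dict:
        """Translate profile fields into execution values, clamped to platform limits."""
        requested_speed = self.nominal_speed_mps * fields["speed_scale"]
        return {
            # The profile can slow the robot down but never exceed the platform cap.
            "speed_mps": min(requested_speed, self.limits.max_speed_mps),
            # The profile can widen the stopping distance but never shrink it
            # below the platform minimum.
            "stopping_distance_m": max(
                fields["stopping_distance_m"], self.limits.min_stopping_distance_m
            ),
            # Negative pauses are meaningless, so floor at zero.
            "pause_s": max(0.0, fields["pause_s"]),
        }


adapter = HumanoidAdapter(nominal_speed_mps=1.0, limits=PlatformLimits(0.8, 0.5))
print(adapter.to_execution_values(
    {"speed_scale": 0.8, "stopping_distance_m": 0.7, "pause_s": 1.0}
))
```

Under this design a second robot would need only its own adapter and limits; the profile fields themselves would stay unchanged. The source reports no cross-platform adapter evaluation yet, so that remains an untested expectation.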

Experimental protocol

Three research questions and one controlled proof setup.

The current study is structured around explicit research questions, a fixed humanoid platform, and three visible interaction comparisons rather than a broad product demo.

RQ1

Can explicit profile fields create visible cross-task behavior differences on the same humanoid platform?

RQ2

Can those differences be surfaced without replacing the robot's controller or autonomy stack?

RQ3

Does an explicit profile surface provide a credible basis for future human-reference and preference-based evaluation?

Current evidence

The proof stays on one humanoid robot and spreads across multiple interaction types.

The current edited proof clip covers object handover, approach-and-stop, and push object while holding the robot body, filming environment, and task framing fixed.
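One way to make the controlled setup concrete is to enumerate the comparison conditions, with the fixed factors repeated on every row and only the profile and task varying. The identifiers below (`FIXED`, `build_conditions`, and the string labels) are illustrative assumptions rather than names from the paper's tooling.

```python
from itertools import product

# Factors held constant across every comparison (labels are placeholders).
FIXED = {"robot": "humanoid_platform", "environment": "filming_environment"}

PROFILES = ("standard", "gentle")
TASKS = ("object_handover", "approach_and_stop", "push_object")


def build_conditions() -> list:
    """Return one condition per (task, profile) pair with the fixed factors attached."""
    return [
        {**FIXED, "task": task, "profile": profile}
        for task, profile in product(TASKS, PROFILES)
    ]


for condition in build_conditions():
    print(condition)
```

Each task appears once per profile, so any visible difference within a task pair can be attributed to the profile rather than to the robot or the scene.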

Preliminary results

What already becomes legible at prototype stage.

The current paper does not claim benchmark completeness. It reports explicit parameter deltas, cross-task visibility, and a preserved controller boundary.

Explicit surfaced deltas

The current comparison between the Standard and Gentle profiles already exposes measurable field differences. Relative to Standard, Gentle uses an 18.0% lower speed scale, a 110.0% longer pause duration, an 18.7% larger stopping distance, and 6.7% more end-effector smoothing.
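The percentages above are relative deltas between two profiles. The sketch below shows the arithmetic, assuming Standard is the baseline. The raw field values are invented purely so the calculation has inputs; the source reports only the percentages, so these numbers should not be read as the paper's actual settings.

```python
def relative_delta_percent(baseline: float, variant: float) -> float:
    """Percent change of variant relative to baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (variant - baseline) / baseline * 100.0


# HYPOTHETICAL raw values: (standard, gentle). Not taken from the paper.
example_fields = {
    "speed_scale": (1.00, 0.82),
    "pause_s": (1.0, 2.1),
    "stopping_distance_m": (0.75, 0.89),
    "ee_smoothing": (0.75, 0.80),
}

for field, (standard, gentle) in example_fields.items():
    delta = relative_delta_percent(standard, gentle)
    print(f"{field}: {delta:+.1f}%")
```

A negative delta means the Gentle value is lower than the Standard value, which is how a "lower speed scale" would appear in this convention.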

Cross-task visibility

The profile difference is not limited to one hero shot. It remains visible across multiple interactions spanning both navigation-related and object-interaction-related behavior.

Controller boundary preserved

The current claim is narrow on purpose. The behavior layer sits above the runtime and does not replace planning, actuation, or hard safety limits.

Limitations

What the paper does not overclaim.

We keep the scope narrow: no multi-robot transfer result yet, no human study yet, and no finished benchmark release.

Single robot and single proof asset.

No formal human preference study yet.

No cross-platform adapter evaluation yet.

Reference network described as architecture and workflow, not a released benchmark dataset.

Files

Open the source package directly.

The local research page is the readable layer. The TeX source and arXiv submission package are available directly for review and upload.